On Saturday, December 12 at Spotify’s New York City offices, 150 musicians, computer programmers, artists, scientists, composers, hardware tinkerers, and many others interested in deeply investigating music will gather at the Sound Visualization & Data Sonification Hackathon. The day starts at Noon with presentations by innovators in turning sound into images and numbers into music. Then all attendees are invited to spend the afternoon and evening brainstorming and building creative sound visualization and data sonification projects.

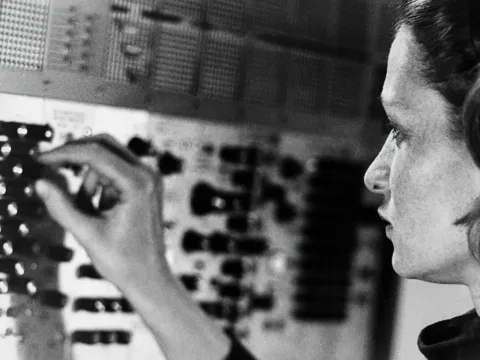

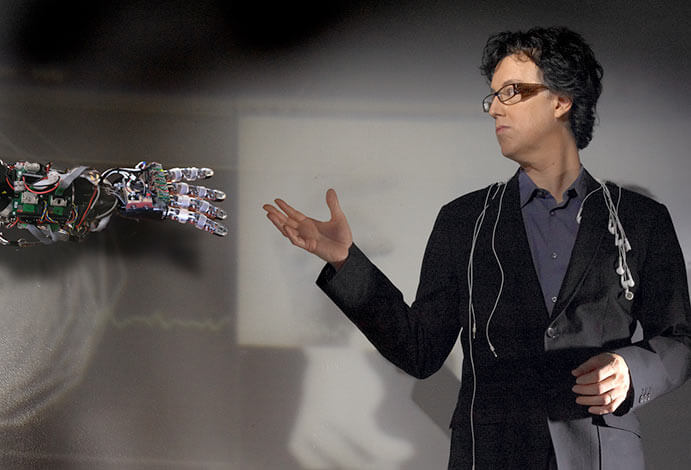

Learn more below about sound visualization and data sonification from folks who will be speaking at the event: Composer Jay Alan Zimmerman will talk about data visualizations for the deaf. Product designer Pia Blumenthal will talk about Sonify, a Musical Image Editor. As the Data-Driven DJ, Brian Foo turns open data into open source algorithmic music, and will talk about Track 1, a sonification of income inequality on the NYC subway.

How could music visualization software help people with deafness experience music?

Jay Alan Zimmerman: Imagine going to a classical concert and hearing nothing but static. Or a dance club and only feeling the thump of the beat as people bounce up and down. These exciting and diverse experiences suddenly become the same: boring as hell. Sure there are blinky lights at clubs, passionate players at classical concerts, and many dazzling animations of music visualizations available. But it’s still just visual noise. What I’m working towards is a new visual language of music that is simultaneously complex yet easy to comprehend because it’s connected to our primal understanding of the human visual world: it has depth, texture, and movement everyone is familiar with, no matter what their hearing. If we can combine this kind of imagery with processing that can capture the specificity and nuance of music, and then liberate the visuals from a computer screen — fully integrating them into the live performance space with video-mapping and other techniques — then we can move beyond eye-candy and info-dumps of music data to actually creating a sensory experience as deeply compelling as hearing great music. Wouldn’t everyone like that, hearing and non?

How could music visualization software aid your composition process?

Jay Alan Zimmerman: First understand: the composing part is not the problem. I use a lot of visual tools and software to trigger my music memory, imagination, and “inner ear” in order to create scores. But when I was fully hearing, the music-making process created a wonderful loop: a music idea triggered making a sound, and then instantly that sound aroused my ears, which inspired the next idea, which triggered the next action, and on and on the music circled in a natural evolution, each development building on the previous one. Now the loop is broken. There is no confirmation the idea is good, no pleasure in having made the sound, no new idea in reaction to the pleasure of hearing. No possible way of making an adjustment or improvement if my inner ear doesn’t match reality. If we can create a music visualization program that truly makes sound visible — that can tickle my brain when the sound is beautiful — then I could stop working so hard trying to find out if the girl singing a high D generating a near perfect sine wave at 85 decibels sounds great or like shit. I wouldn’t need to ask a billion questions about what everyone else is hearing so that I can plug it into my mind. The best new aids would be the ones that inform me about sound while making the process more fun and playful again. It’s still fun when I create it in my head, but it’d be nice to not have to think so much.

Does visualization of music change how we hear music?

Pia Blumenthal: Visualizing music in real-time can absolutely impact the way someone experiences listening in both analytical and sensorial ways. Since music is rich in sonic information, visualization gives tangible form to otherwise abstract qualities like pitch, timbre, spatial location and duration. Accompanying visualizations can contribute towards a more active state of listening by calling attention to or reinforcing sonic patterns and structure. And because music is temporal by nature, visualizations can act as a snapshot of what to expect when listening.

Historically, many artists and musicians have also believed in the emotional impact one medium has on the other: Kandinsky, Scriabin, and Messiaen, to name a few. Wagner’s concept of Gesamtkunstwerk is a good example, as it holds that all sensory elements can be attuned to a single, profound experience (in his case, the theater). Music visualization presents unified visual and auditory sensations to the beholder, so it, too, may be considered a more complete sensory experience.

How can data sonification be used to interpret data? Is the human auditory system sensitive to different patterns than the human visual system?

Pia Blumenthal: Not only do we hear at a higher resolution than we see (44,100 Hz compared to the traditional 24 fps), but as we listen we can parse multiple streams of information (pitch, timbre, location, duration, source separation, etc.) with no effort. Because of our human ability to identify simultaneous changes in different auditory dimensions, we can integrate them into comprehensive mental images. Sonification can be used to exploit this for the purposes of identifying trends and patterns in large sets of data. And when paired with data visualization, sonification can provide a more holistic approach to exploring information.

Brian Foo: Unlike charts or visualizations, music is abstract and not that great at representing or communicating large sets of data accurately or efficiently. However, music has a few clear advantages. First, it has the ability to evoke emotion and alter mood which can help the listener understand data intuitively and suggest how they should feel about the data rather than just communicate the data itself. Also, music is beneficial to the creator since they can curate a temporal experience for the listener that can be consumed casually and viscerally. In contrast, a visual chart can be navigated in many ways and usually does not impose a particular narrative structure. Lastly, music gets stuck in the listener’s head. If one can attach meaning or data to that music, perhaps those things (e.g. economic issues, environmental issues, personal stories, etc.) will get stuck in the listener’s head as well.
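For readers coming to the hackathon who have not tried sonification before, here is a minimal sketch of one common approach, often called parameter mapping: each data value is scaled linearly onto a pitch range. This is only an illustration and not the method used by either speaker. The function names (`value_to_midi`, `midi_to_hz`, `sonify`), the note range, and the sample `readings` are all hypothetical.

```python
def value_to_midi(value, lo, hi, low_note=48, high_note=84):
    """Linearly map a data value in [lo, hi] to a MIDI note number.

    The default range (48 to 84, three octaves) is an arbitrary choice.
    """
    if hi == lo:
        # All values identical: fall back to the lowest note.
        return low_note
    fraction = (value - lo) / (hi - lo)
    fraction = min(1.0, max(0.0, fraction))  # clamp out-of-range values
    return round(low_note + fraction * (high_note - low_note))


def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in Hz (note 69 = A4 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def sonify(values, low_note=48, high_note=84):
    """Map a whole series to MIDI notes, scaled to the series' own min and max."""
    lo, hi = min(values), max(values)
    return [value_to_midi(v, lo, hi, low_note, high_note) for v in values]


# Hypothetical data series, invented for this example.
readings = [10, 20, 40, 80, 40, 20]
notes = sonify(readings)
for value, note in zip(readings, notes):
    print(f"value {value:>3} -> MIDI note {note} ({midi_to_hz(note):.1f} Hz)")
```

A rising series becomes a rising melody, so trends can be heard as contour. Other dimensions mentioned above, such as timbre, duration, or spatial location, could carry additional columns of the same dataset.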

Why is sonification of data the right combination of media for you as an artist?

Brian Foo: My background is primarily in computer science and visual arts, and music composition is brand new for me. I like to use something that I’m comfortable with (data, visualization) to learn something I’m unfamiliar with (music). I believe thoughtfully combining something like music, that is abstract and expressive, with something that is analytical and concrete, like data science, can create something new that leverages the benefits and offsets the flaws of each practice.

Sound Visualization & Data Sonification Hackathon

Saturday, December 12th, 2015

Noon to 10:00 PM

Spotify NYC

45 W 18th St, 7th Floor

New York, NY 10011

Free. All are welcome: RSVP

Follow @musichackathon on Twitter or Like them on Facebook at facebook.com/musichackathon.